As data centers demand higher storage density and computational efficiency, traditional U.2 interfaces are increasingly constrained by space and scalability. In this context, MCIO (Mini Cool Edge IO), a high-density, multi-lane physical interconnect standard, is being widely adopted across Intel, AMD, and other mainstream server platforms.

1. What Is MCIO?

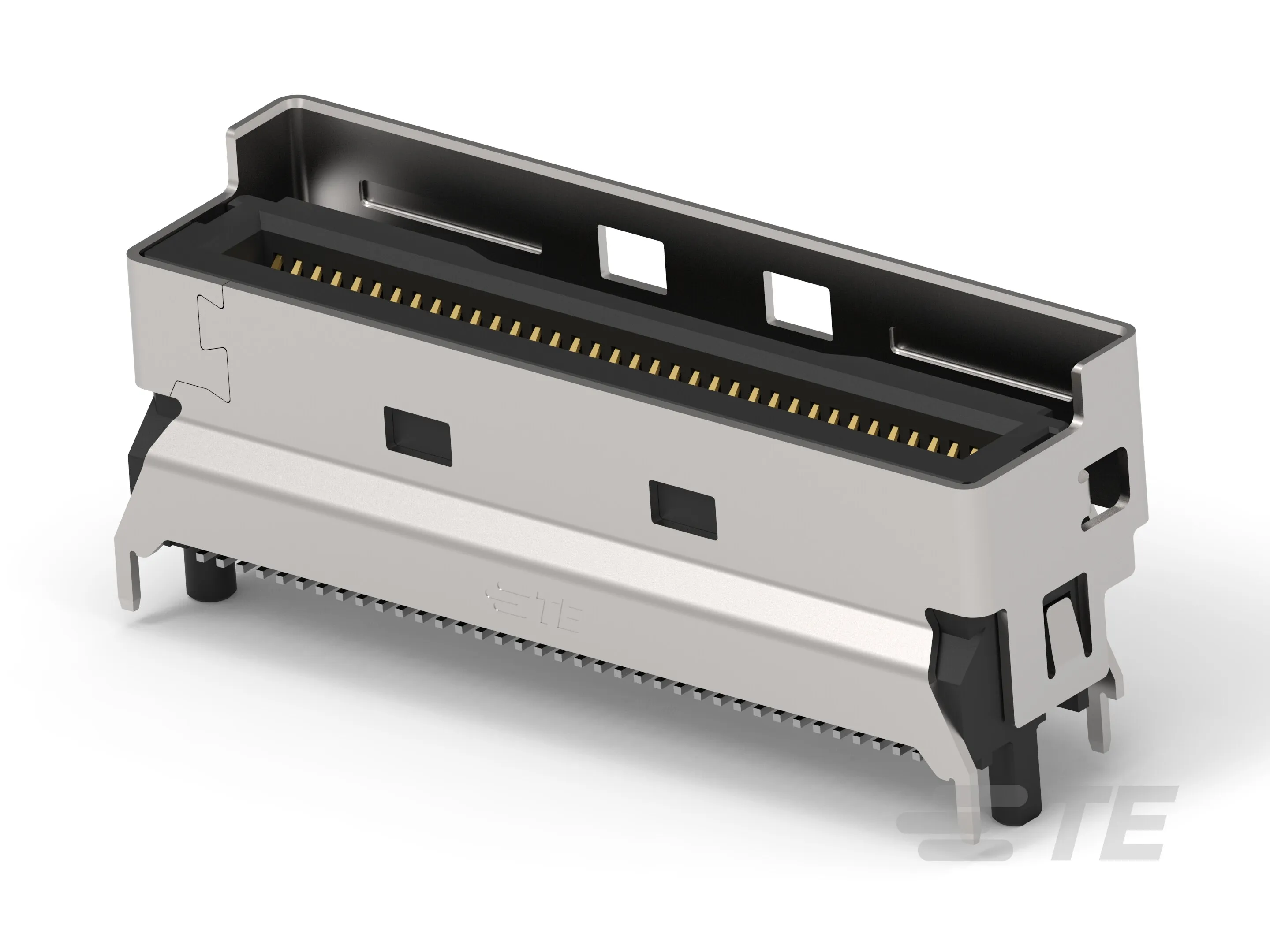

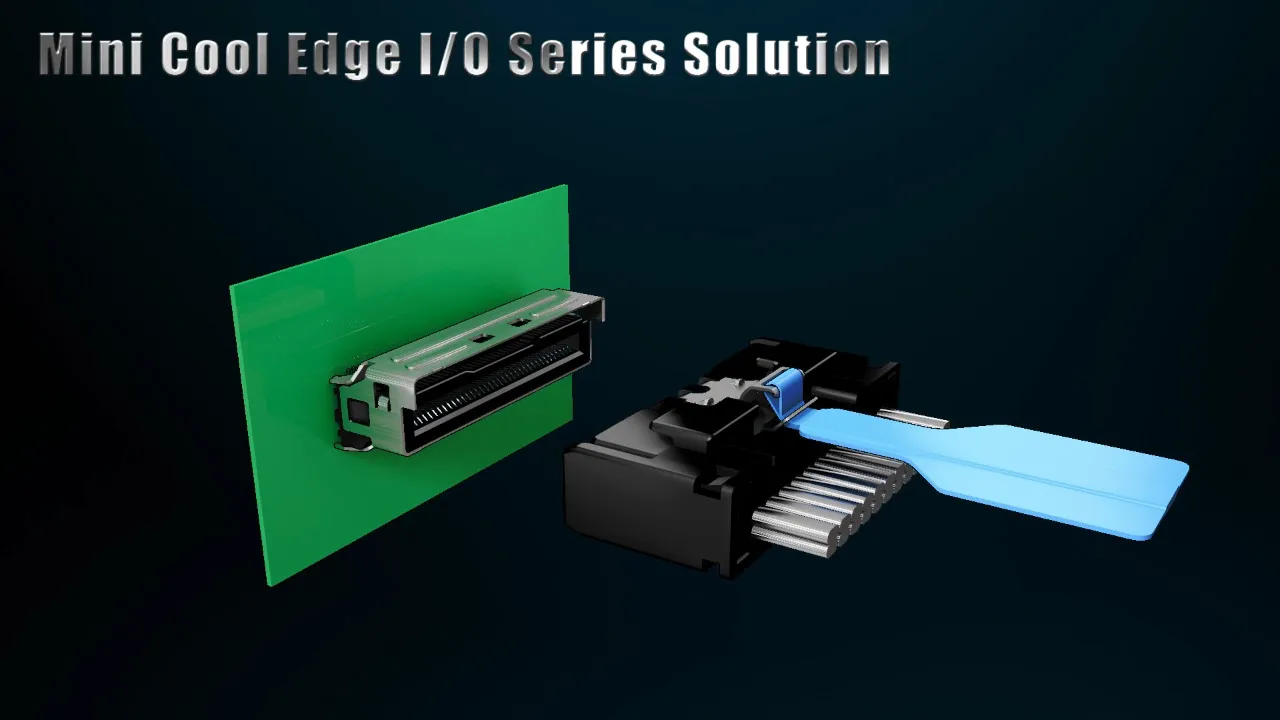

MCIO is not a communication protocol but a physical-layer connector specification defined by SFF-TA-1016. Its compact connector comes in 38-pin (x4), 74-pin (x8), and 124-pin (x16) variants, so a single port supports up to 16 PCIe lanes and can connect multiple NVMe SSDs or accelerator devices at once.

2. Key Application Scenarios

- Enterprise NVMe Storage Scaling: A single MCIO port can drive 2–4 E1.S SSDs, significantly increasing storage density per server node.

- AI Training Server Interconnect: Enables direct GPU-to-smart-SSD links, bypassing CPU bottlenecks and reducing latency.

- Edge Computing Nodes: Its compact form factor meets the dual demands of space efficiency and performance in micro-data centers.
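The lane arithmetic behind the first scenario can be sketched in a few lines of Python. This is a minimal illustration, assuming each E1.S SSD takes an x4 link and the MCIO port is x8 or x16; the function name `bifurcate` and its parameters are hypothetical and not part of any vendor tool.

```python
def bifurcate(port_lanes: int, lanes_per_drive: int = 4) -> list[tuple[int, int]]:
    """Split a port's lanes into equal-width links, one per drive.

    Returns (first_lane, last_lane) pairs. Assumes equal-width splits,
    e.g. x8 -> 2 x4 or x16 -> 4 x4 (an assumption for illustration).
    """
    if port_lanes <= 0 or lanes_per_drive <= 0:
        raise ValueError("lane counts must be positive")
    if port_lanes % lanes_per_drive != 0:
        raise ValueError("port width must be a multiple of the drive width")
    return [
        (first, first + lanes_per_drive - 1)
        for first in range(0, port_lanes, lanes_per_drive)
    ]


if __name__ == "__main__":
    for width in (8, 16):
        links = bifurcate(width)
        print(f"x{width} port -> {len(links)} x4 drives, lanes {links}")
```

Under these assumptions an x8 port yields two drives and an x16 port yields four, which matches the 2–4 range above. Whether a given port can actually be split this way depends on the platform's bifurcation support.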

3. Core Technical Specifications

| Parameter | Specification |
|---|---|
| Physical Interface | MCIO connector per SFF-TA-1016 (38-pin / 74-pin / 124-pin) |
| Supported Protocols | PCIe Gen4 / Gen5 + NVMe |
| Max Lane Count | 16 lanes (4× x4 configuration) |
| Data Rate per Lane | Up to 32 GT/s (PCIe Gen5) |
| Compatibility | Backward compatible with U.2/U.3 via adapter boards |
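As a rough worked example of what the per-lane data rate means in practice, the Python sketch below converts GT/s into approximate one-direction bandwidth. It assumes the 128b/130b line encoding used by PCIe Gen4 and Gen5 and ignores packet and protocol overhead, so real throughput will be lower; the function and constant names are illustrative only.

```python
# Raw line rate per lane in GT/s for each PCIe generation.
LINE_RATE_GTS = {"gen4": 16.0, "gen5": 32.0}

# PCIe Gen3 and later send 128 payload bits in every 130-bit block.
ENCODING_EFFICIENCY = 128 / 130


def link_bandwidth_gb_per_s(generation: str, lanes: int) -> float:
    """Approximate one-direction bandwidth in GB/s, before protocol overhead."""
    if generation not in LINE_RATE_GTS:
        raise ValueError(f"unknown generation: {generation!r}")
    if lanes not in (1, 2, 4, 8, 16):
        raise ValueError("lanes must be 1, 2, 4, 8, or 16")
    gigabits_per_s = LINE_RATE_GTS[generation] * ENCODING_EFFICIENCY * lanes
    return gigabits_per_s / 8  # bits -> bytes


if __name__ == "__main__":
    for gen in ("gen4", "gen5"):
        for lanes in (4, 8, 16):
            bw = link_bandwidth_gb_per_s(gen, lanes)
            print(f"PCIe {gen} x{lanes}: ~{bw:.1f} GB/s per direction")
```

By this estimate a Gen5 x4 link to a single SSD tops out near 15.8 GB/s per direction, and a full x16 MCIO port near 63 GB/s.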

4. Roadmap and Future Trends

Since its debut with Intel’s Ice Lake-SP platform in 2020, MCIO has entered a phase of ecosystem maturity:

- 2023–2024: Full adoption by AMD EPYC platforms, driving industry-wide standardization.

- 2025+: With the rise of CXL (Compute Express Link), which runs over the PCIe physical layer, MCIO is expected to carry CXL links for memory pooling, becoming a foundational physical layer for heterogeneous computing.

Need MCIO Cables or Backplane Solutions?

YZMU offers custom high-speed harnesses for MCIO, Oculink, and SlimSAS, with full PCIe 5.0 signal integrity validation and sample testing support.
